- Principal Investigator: Santani Teng

What is echolocation?

Sometimes, the surrounding world is too dark and silent for typical vision and hearing. This is true in deep caves, for example, or in murky water where little light penetrates. Animals living in these environments often have the ability to echolocate: They make sounds and listen for their reflections. Like turning on a flashlight in a dark room, echolocation is a way to illuminate objects and spaces actively using sound.

Sonar for people

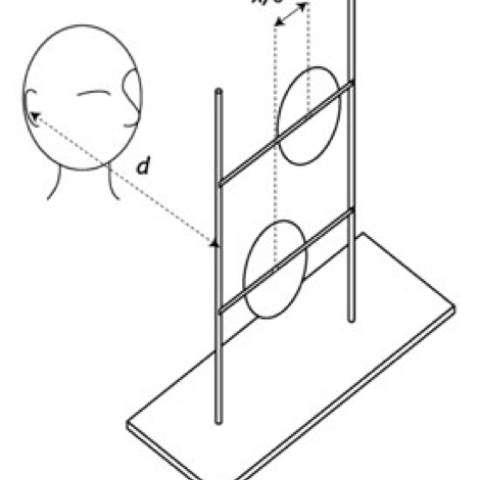

Using tongue clicks, cane taps or footfalls, some blind humans have demonstrated an ability to use echolocation (also called sonar, or biosonar in living organisms) to sense, interact with, and move around the world. What information does echolocation afford its practitioners? What are the factors contributing to learning it? How broadly is it accessible to blind, and sighted, people? Continuing past work from MIT and UC Berkeley, at Smith-Kettlewell I am investigating these and other questions: what spatial resolution is available to people using echoes to perceive their environments, how well echoes promote orientation and mobility in blind persons, and how this ability is mediated in the brain. Previous work has shown that sighted blindfolded people can readily learn some echolocation tasks with a small amount of training, but that blind practitioners possess a clear expertise advantage, sometimes distinguishing the positions of objects to an angular precision of just a few degrees (about the width of a thumbnail held at arm's length). Identifying the basic perceptual factors that contribute to this performance, and what might let a novice echolocate like an expert, would clarify our understanding of human perception and be a valuable resource for Orientation & Mobility instructors promoting greater independent mobility in blind travelers.

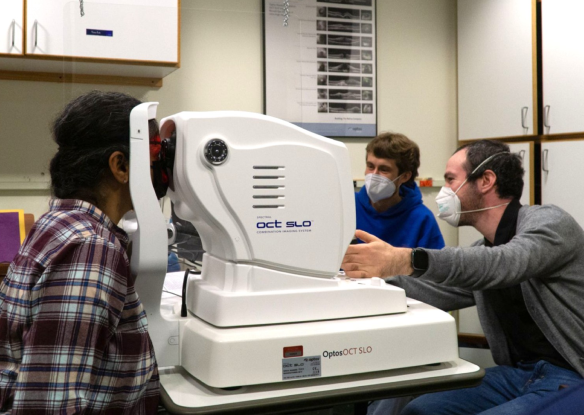

Assisted echolocation

Inspired by both human and non-human echolocators, and driven by advances in computing technology, we are investigating artificial echoes as a perceptual and travel aid. For example, ultrasonic echoes, useful to bats but inaudible to humans, carry higher-resolution information than audible echoes. An assistive device could make the perceptual advantages of ultrasonic echolocation available to human listeners. Previous work showed that untrained listeners can quickly make spatial judgments using these artificial echoes, and we are working on making artificial echolocation more perceptually useful as well as more ergonomic and convenient.

Neural mechanisms

Echolocation relies on making and perceiving sounds, but in blind expert practitioners it activates brain regions typically used for vision. Using magnetoencephalography (MEG), electroencephalography (EEG), and magnetic resonance imaging (MRI), this recent line of inquiry seeks to understand how the brain processes echo information, separates it from other incoming sounds, and uses it to guide behavior.