Abstract

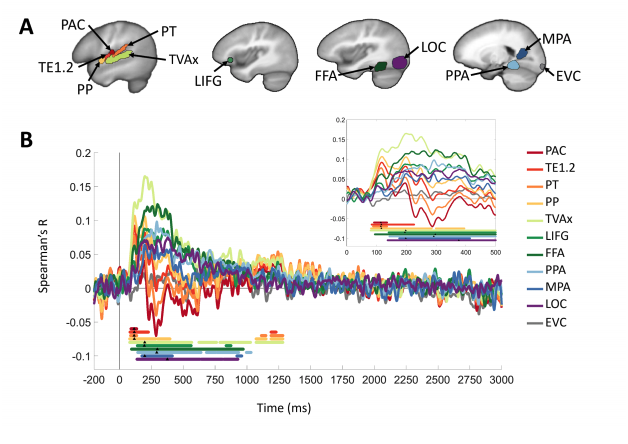

It remains unclear whether the human brain, as it transforms incoming sounds, assigns semantic meaning via distributed, domain-general architectures or via specialized hierarchical streams. Here we show that the spatiotemporal progression from acoustic to semantically dominated representations is consistent with a hierarchical processing scheme. Combining magnetoencephalography (MEG) and functional magnetic resonance imaging (fMRI) patterns, we found superior temporal responses beginning 80 ms after stimulus onset and spreading to extratemporal cortices by 130 ms. Early, acoustically dominated representations trended systematically toward semantic category dominance over time (after 200 ms) and space (beyond primary cortex). Semantic category representation was spatially specific: vocalizations were preferentially distinguished in temporal and frontal voice-selective regions and in the fusiform face area, whereas scene and object sounds were distinguished in parahippocampal and medial place areas. Our results are consistent with an extended auditory processing hierarchy in which acoustic representations give rise to multiple streams specialized by category, including areas typically considered visual cortex.

Competing Interest Statement

The authors have declared no competing interest.
