Scaling up accessible tactile maps and 3D models with CamIO

Event Date:

Monday, October 27th, 2025 – 5:30 PM to 7:00 PM

Speakers:

James Coughlan, Mykhailo Boiko, Olha Stepanchenko

Host:

James Coughlan & Natela Shanidze

Abstract

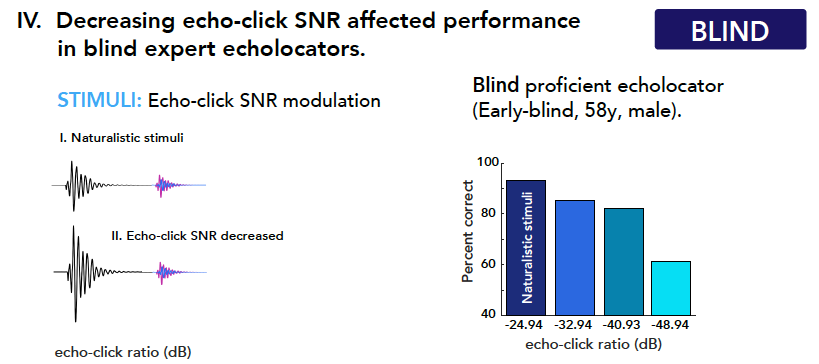

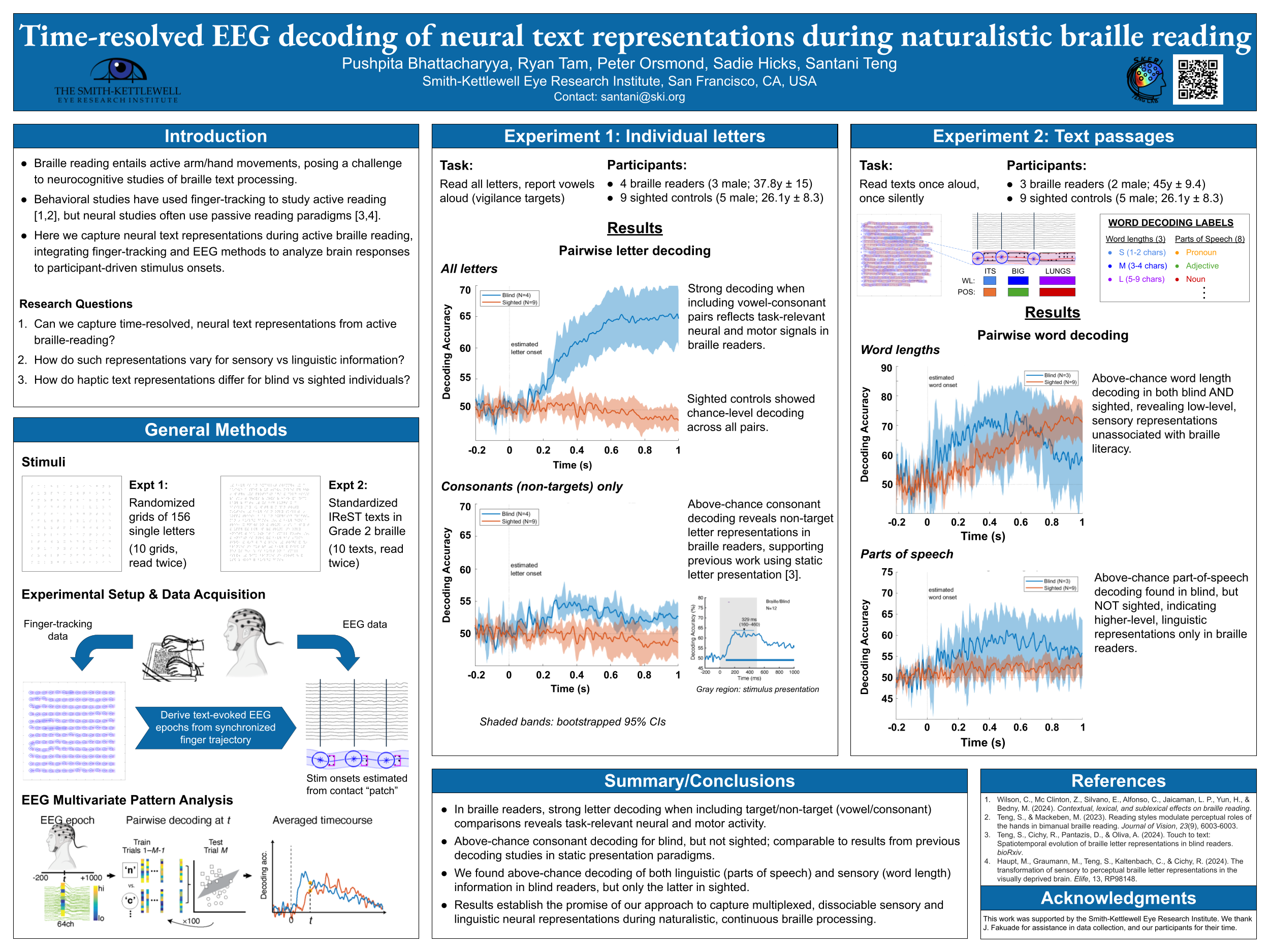

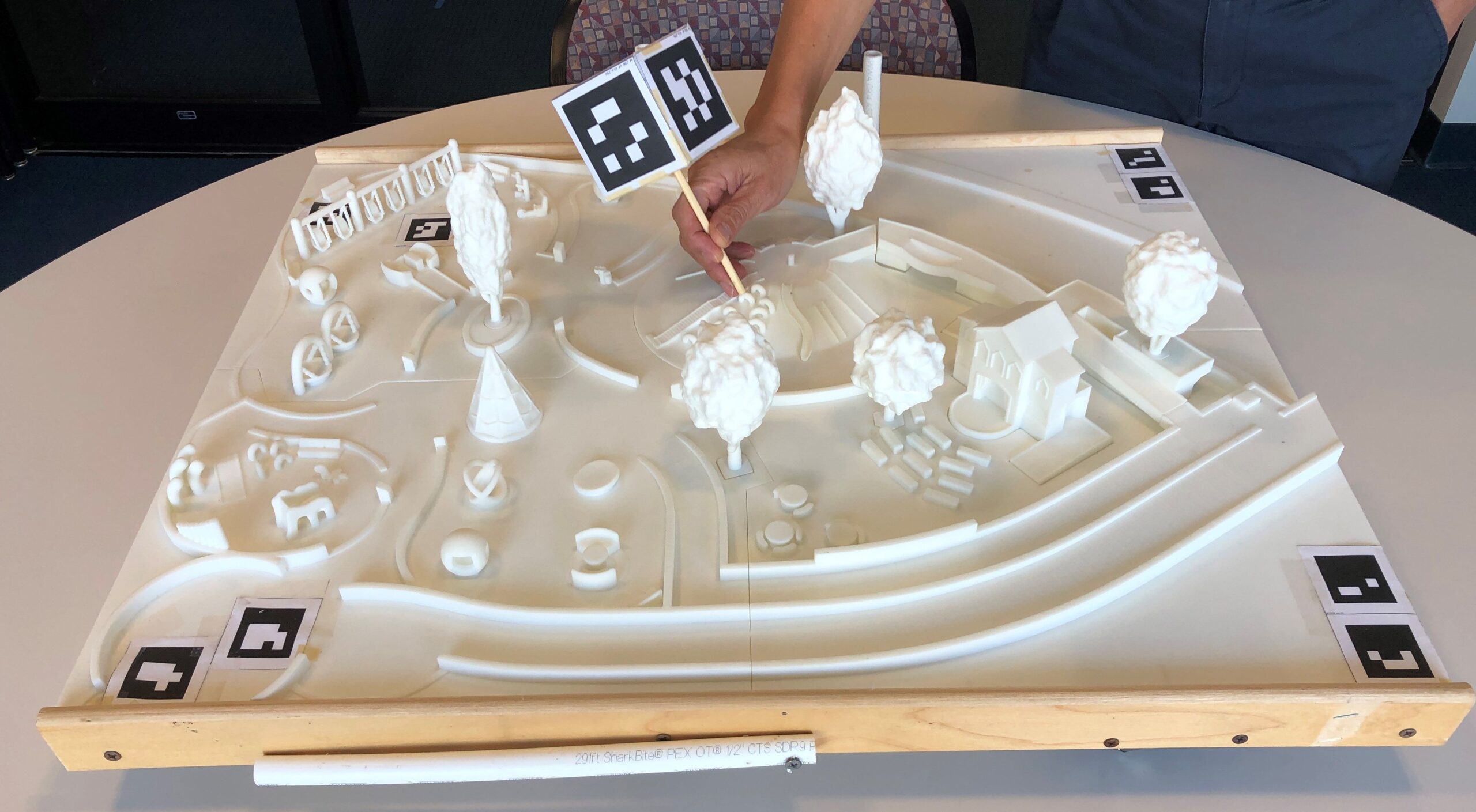

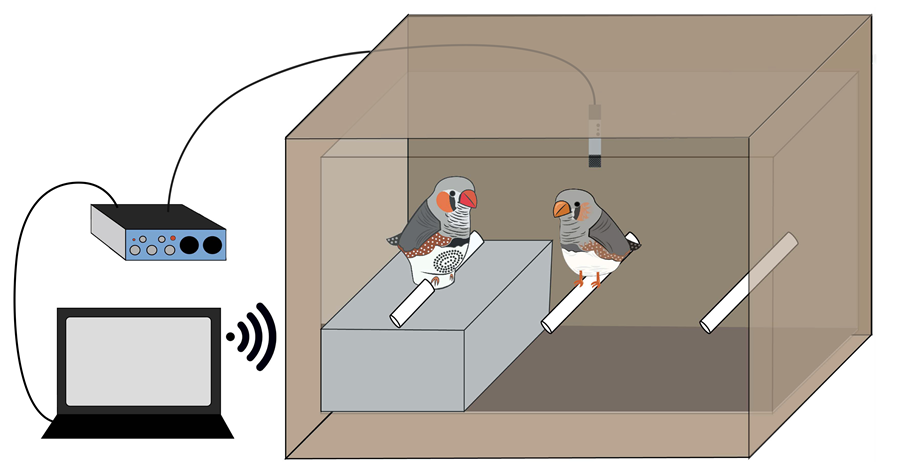

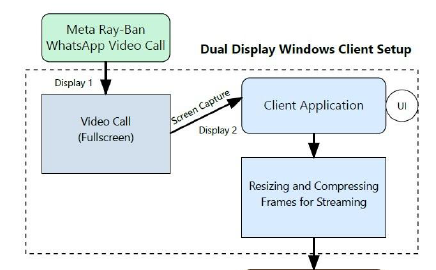

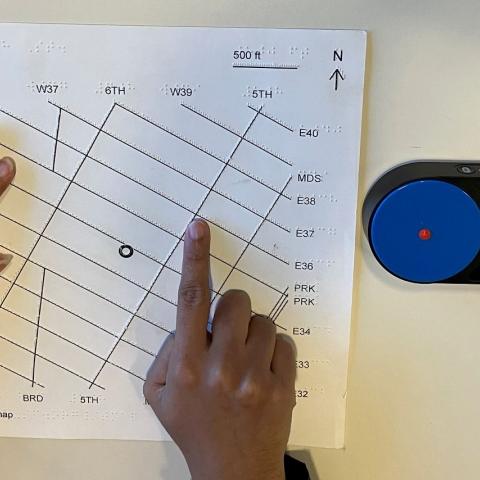

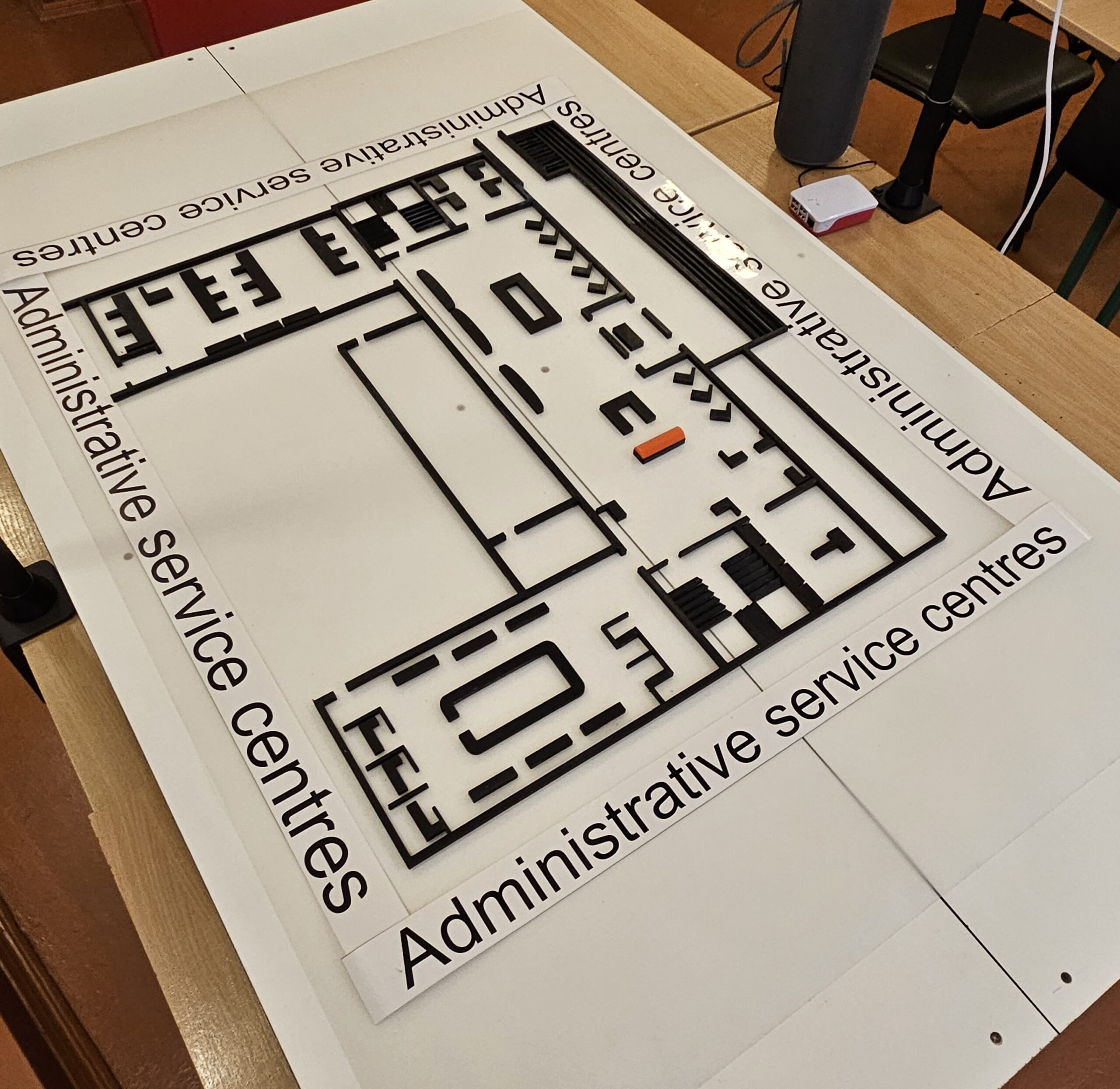

In the US alone, approximately six million people have low vision, of whom about one million are blind. These numbers are growing as the population ages. For the blind and low vision (BLV) population, tactile materials such as tactile maps, relief maps, tactile infographics (e.g., bar charts) and 3D educational models (e.g., a plant cell model) are indispensable for understanding spatial information. However, it can be challenging for BLV individuals to understand the meaning of this spatial information: braille labels can provide some information, but braille takes up a lot of space and not everyone reads braille.

To address this problem, the CamIO (short for “Camera Input-Output”) system was created to enable blind and visually impaired individuals to interact with physical objects (such as documents, maps, devices, and 3D models) by providing real-time audio feedback based on the location that they are touching. We describe recent developments in CamIO, including a public installation in Palo Alto, the implementation of an AI-based conversational interface, and international collaborations with the University of Milan in Italy and Rivne Applied College of Information Technologies in Ukraine. Colleagues in Ukraine have begun implementation in Rivne, with the goal of broadening access to this technology across Ukraine.

During this in-person event, guests will hear about CamIO, have an opportunity to try it, and meet the lead engineers from Rivne, who will be visiting Smith-Kettlewell as part of our collaboration.

Special Guests: Drs. Mykhailo Boiko and Olha Stepanchenko of Rivne Applied College of Information Technologies

To attend the event, please RSVP here.

Our Speakers:

James M. Coughlan is a Senior Scientist and Director of the Rehabilitation Engineering Research Center on Blindness and Low Vision at the Smith-Kettlewell Eye Research Institute. His main research focus is the use of computer vision and sensor technologies to facilitate greater accessibility of the physical environment for blind and visually impaired persons.

Mykhailo Boiko is the Deputy Director and Lecturer at Rivne Applied College of IT and a Scientist at EOS Data Analytics. With nearly ten years’ experience in software engineering, data analytics, and edtech, his research centers on computer science and biophysical modeling. His current interests include interdisciplinary innovation and technology transfer between academia and industry.

Olha Stepanchenko is the Director of the Rivne Applied College of Information Technologies, Executive Director of the Rivne IT Cluster, and an Associate Professor. Her work centers on industry-academia partnerships and practice-oriented programs – micro-credentials, work-based learning, and startup/reskilling tracks for youth and veterans. She is currently scaling cluster-driven projects in Rivne to position the college as a regional innovation hub.

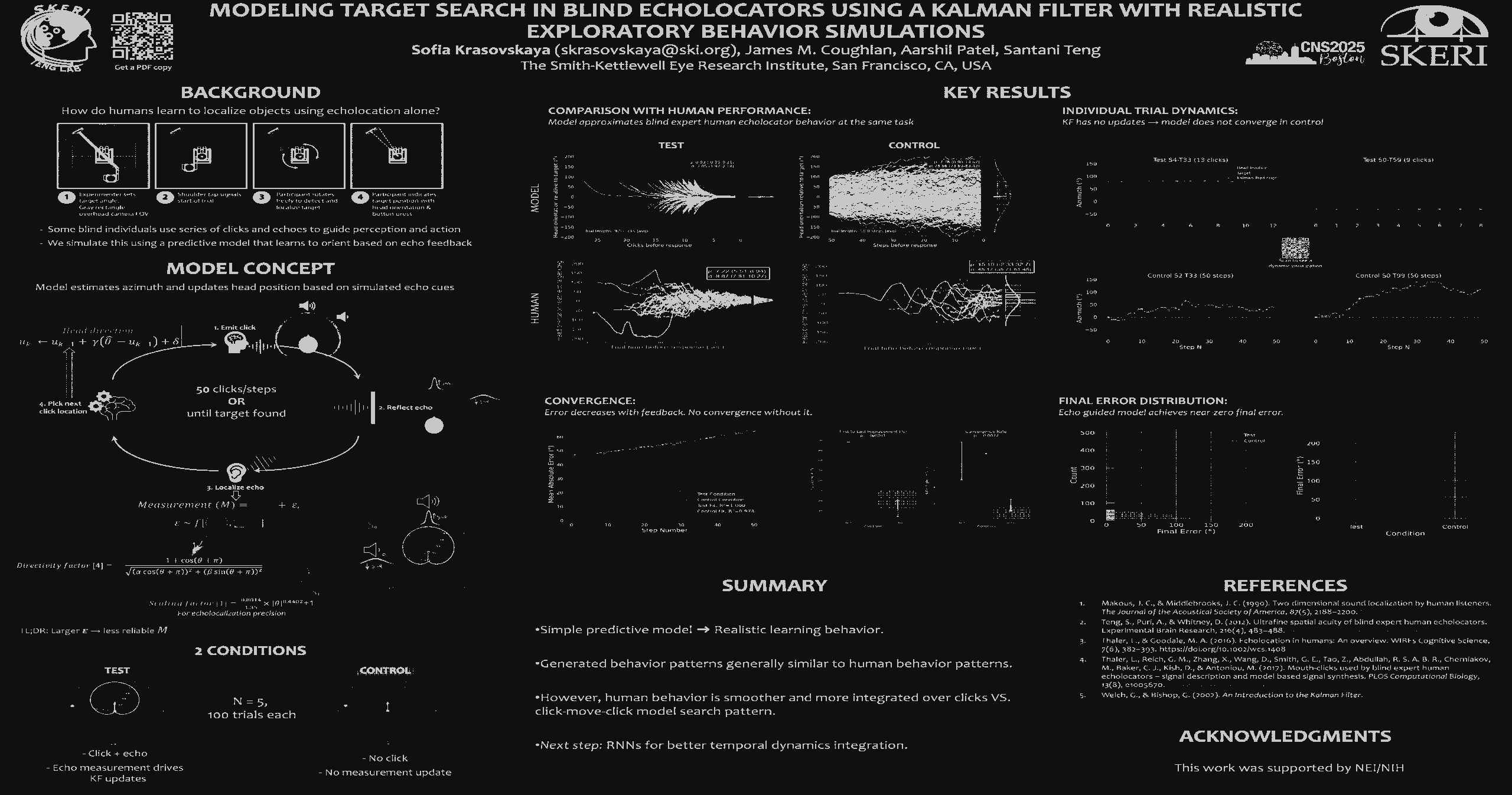

Projects

- Active

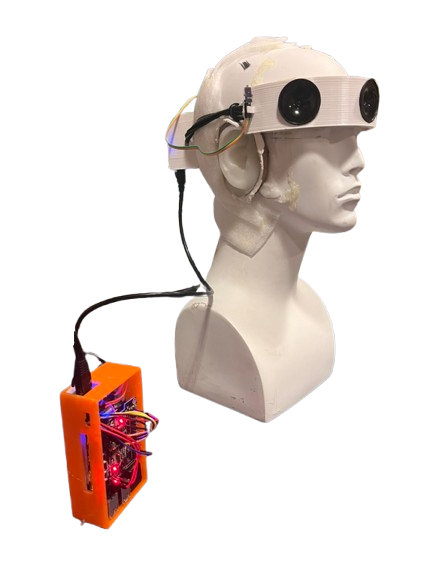

CamIO

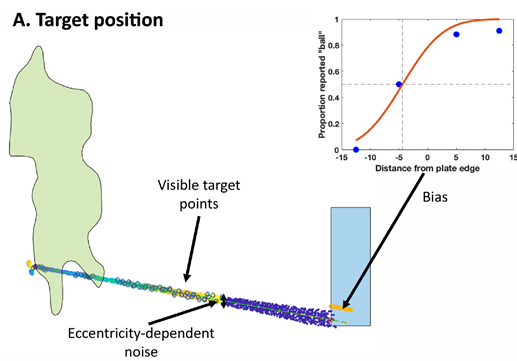

CamIO (short for “Camera Input-Output”) is a system to make physical objects (such as documents, maps, devices and 3D models) accessible to blind and visually impaired persons, by providing real-time audio feedback in response to the location on an object that the user is touching. CamIO currently works on iOS using the built-in camera and an inexpensive hand-held stylus, made out of materials such as 3D-printed plastic, paper or wood.
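The idea of audio feedback keyed to the touched location can be illustrated with a minimal sketch. This is not CamIO's actual code, which is not described on this page. It assumes, purely for illustration, that the stylus tip position has already been estimated in the model's coordinate system and that each audio label is attached to a spherical region around a point on the object. The names `Hotspot`, `find_hotspot`, and `PLAYGROUND`, and all coordinates, are hypothetical.

```python
from dataclasses import dataclass
from math import dist
from typing import Optional, Sequence, Tuple

Point = Tuple[float, float, float]


@dataclass(frozen=True)
class Hotspot:
    """Hypothetical labeled region on a model (units are arbitrary, e.g. cm)."""
    label: str       # text that would be spoken aloud
    position: Point  # center of the region in model coordinates
    radius: float    # how close the stylus tip must be to trigger the label


def find_hotspot(tip: Point, hotspots: Sequence[Hotspot]) -> Optional[Hotspot]:
    """Return the nearest hotspot whose radius contains the tip, or None."""
    best: Optional[Hotspot] = None
    best_distance = float("inf")
    for hotspot in hotspots:
        d = dist(tip, hotspot.position)
        if d <= hotspot.radius and d < best_distance:
            best, best_distance = hotspot, d
    return best


# Made-up example data for a playground map.
PLAYGROUND = [
    Hotspot("Slide", (2.0, 3.0, 1.0), 1.5),
    Hotspot("Swing set", (8.0, 3.0, 1.0), 1.5),
]

if __name__ == "__main__":
    hit = find_hotspot((2.5, 3.0, 1.0), PLAYGROUND)
    # A real system would pass the label to a text-to-speech engine instead.
    print(hit.label if hit else "No label here")
```

In this sketch the camera tracking, which is the hard part of a real system, is left out entirely; only the lookup from a position to a label is shown.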

See a short video demonstration of CamIO here, showing how the user can trigger audio labels by pointing a stylus at “hotspots” on a 3D map of a playground. See…

CamIO Hands

This project builds on the CamIO project to provide point-and-tap interactions allowing a user to acquire detailed information about tactile graphics and 3D models.

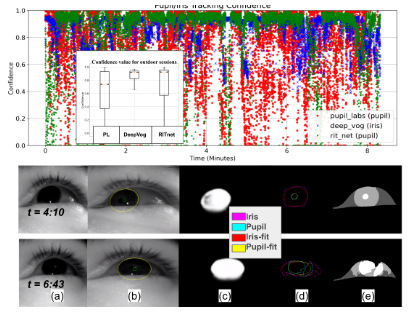

The interface uses an iPhone’s depth and color cameras to track the user’s hands while they interact with a model. When the user points to a feature of interest on the model with their index finger, the system reads aloud basic information about that feature. For additional information, the user lifts their index finger and taps the feature again. This process can be repeated multiple times to access additional levels of…

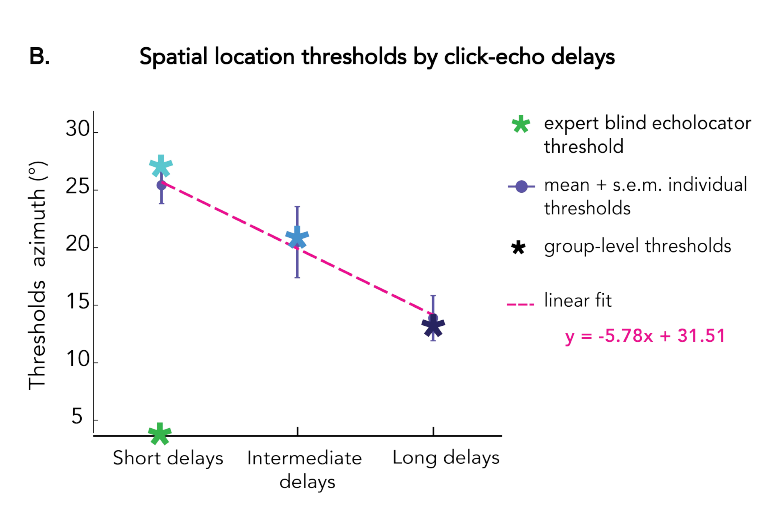

MapIO: a Gestural and Conversational Interface for Tactile Maps

For individuals who are blind or have low vision, tactile maps provide essential spatial information but are limited in the amount of data they can convey. Digitally augmented tactile maps enhance these capabilities with audio feedback, thereby combining the tactile feedback provided by the map with an audio description of the touched elements. In this context, we explore an embodied interaction paradigm to augment tactile maps with conversational interaction based on Large Language Models, thus enabling users to obtain answers to arbitrary questions regarding the map. We analyze the type of…

Labs

- Coughlan Lab: The goal of our laboratory is to develop and test assistive technology for blind and visually impaired persons that is enabled by computer vision and other sensor technologies.

Centers

- Rehabilitation Engineering Research Center: The Center's research goal is to develop and apply new scientific knowledge and practical, cost-effective devices to better understand and address the real-world problems of blind, visually impaired, and deaf-blind...