Event Date:

Thursday, January 7th, 2016 – 12:00 PM to 1:00 PM

Presenter: Jeremy Badler, Ph.D.

Playable Innovative Technology Lab,

Northeastern University

Host: Stephen J. Heinen, Ph.D.

Abstract

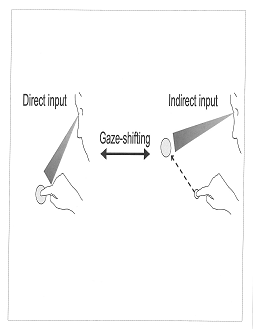

Virtual environments (VE) are increasingly used for training, therapeutic, and entertainment purposes. In the physical world, eye movements, head movements, and locomotion operate in synchrony to orient gaze toward objects or scenes of interest. However, their characterization in VE is only beginning to be studied. In VE, a mouse is often used as a proxy for head movements, orienting the camera toward the desired view direction, while keyboard arrow keys simulate locomotion. Using eye tracking in conjunction with movement and camera view logging, we are characterizing gaze behavior under such virtual conditions. We have found anticipatory fixations and gaze shifts that precede mouse actions such as navigation and scanning movements, and we are working toward a predictive model for navigation based on gaze. I’ll also discuss the implications of our research for post-traumatic stress disorder (PTSD), where virtual reality therapy is increasingly used as a treatment. Since PTSD patients also show characteristic differences in eye movement behavior, such as increased threat attention, a better understanding of eye movements in VE could potentially yield additional diagnostic markers or increase treatment efficacy.