Principal Investigator:

Contact Information:

Email: coughlan@ski.org

Mobile Phone: (415) 345-2146

2318 Fillmore St.

San Francisco, CA 94115

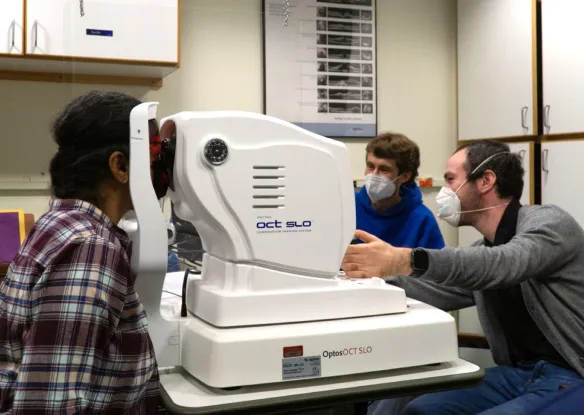

The goal of our laboratory is to develop and test assistive technology for blind and visually impaired persons that is enabled by computer vision and other sensor technologies.

Publications

Projects

Audiom Map of Smith-Kettlewell

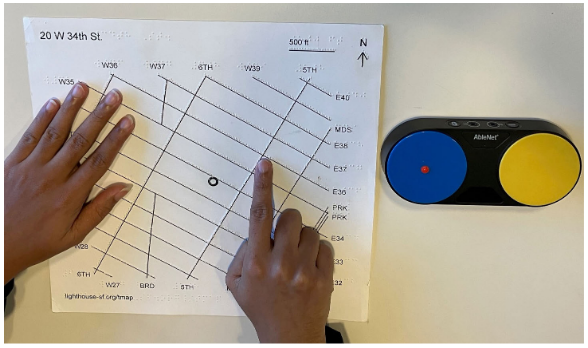

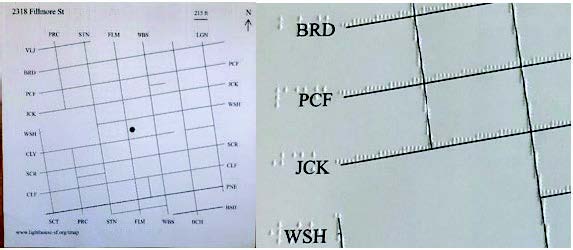

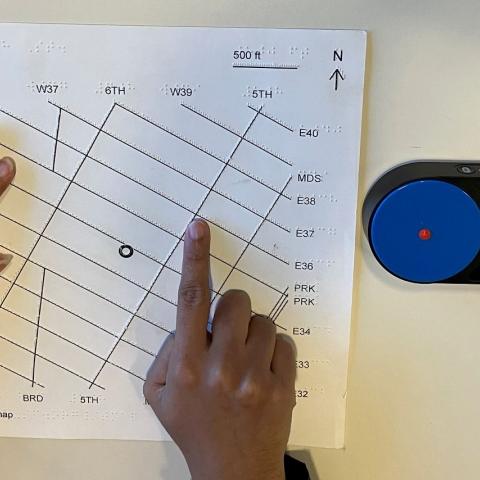

MapIO: a Gestural and Conversational Interface for Tactile Maps

Using VR to Help Train Visually Impaired Users to Aim a Camera

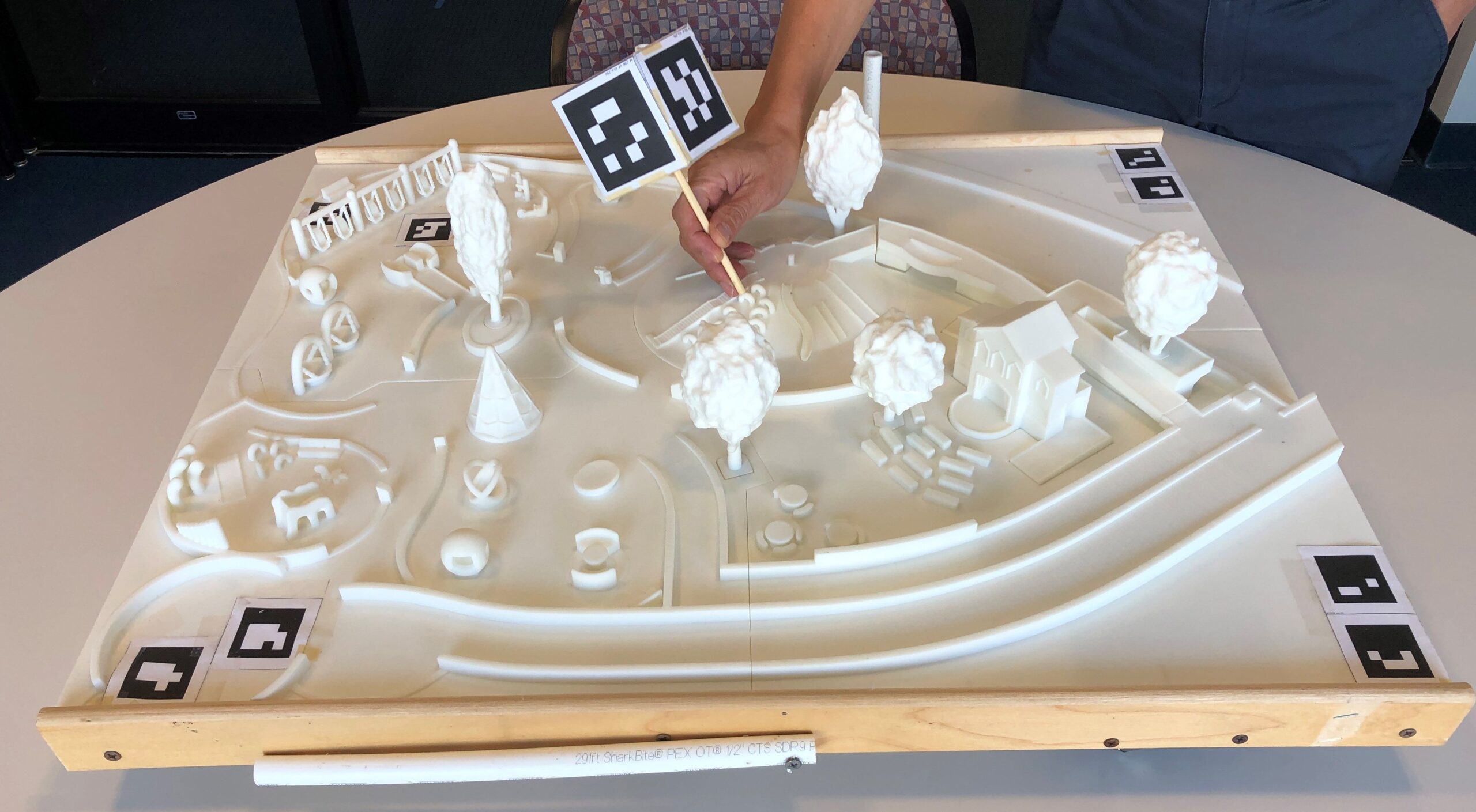

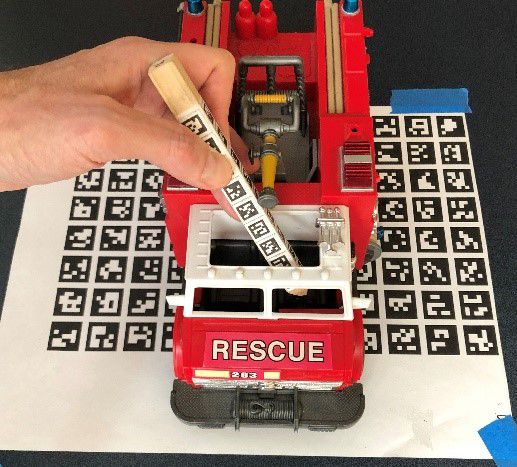

CamIO Hands

Magic Map

Outreach at Smith-Kettlewell

Audiom

Blindness and Low Vision Support Group

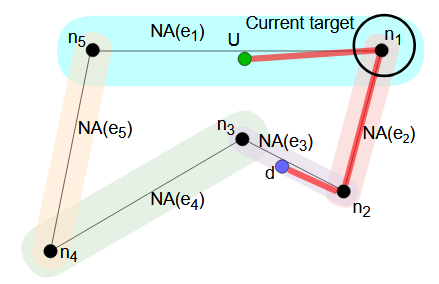

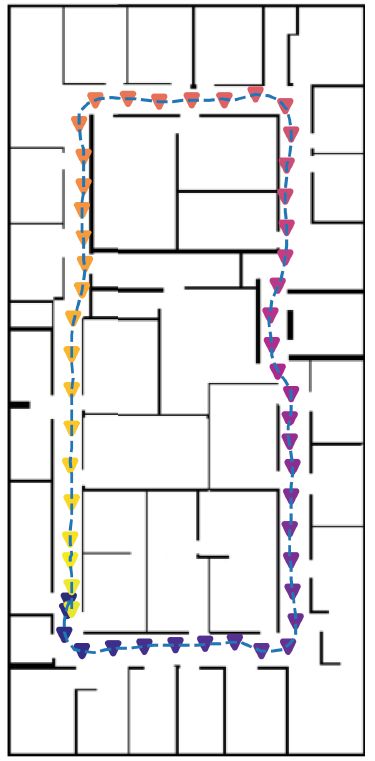

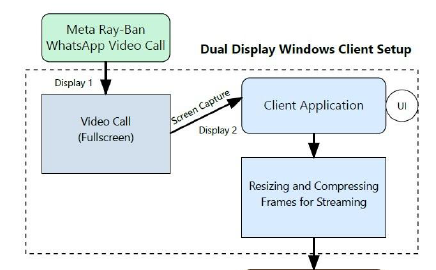

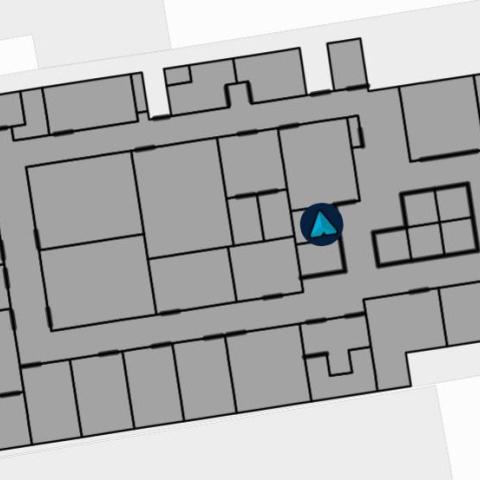

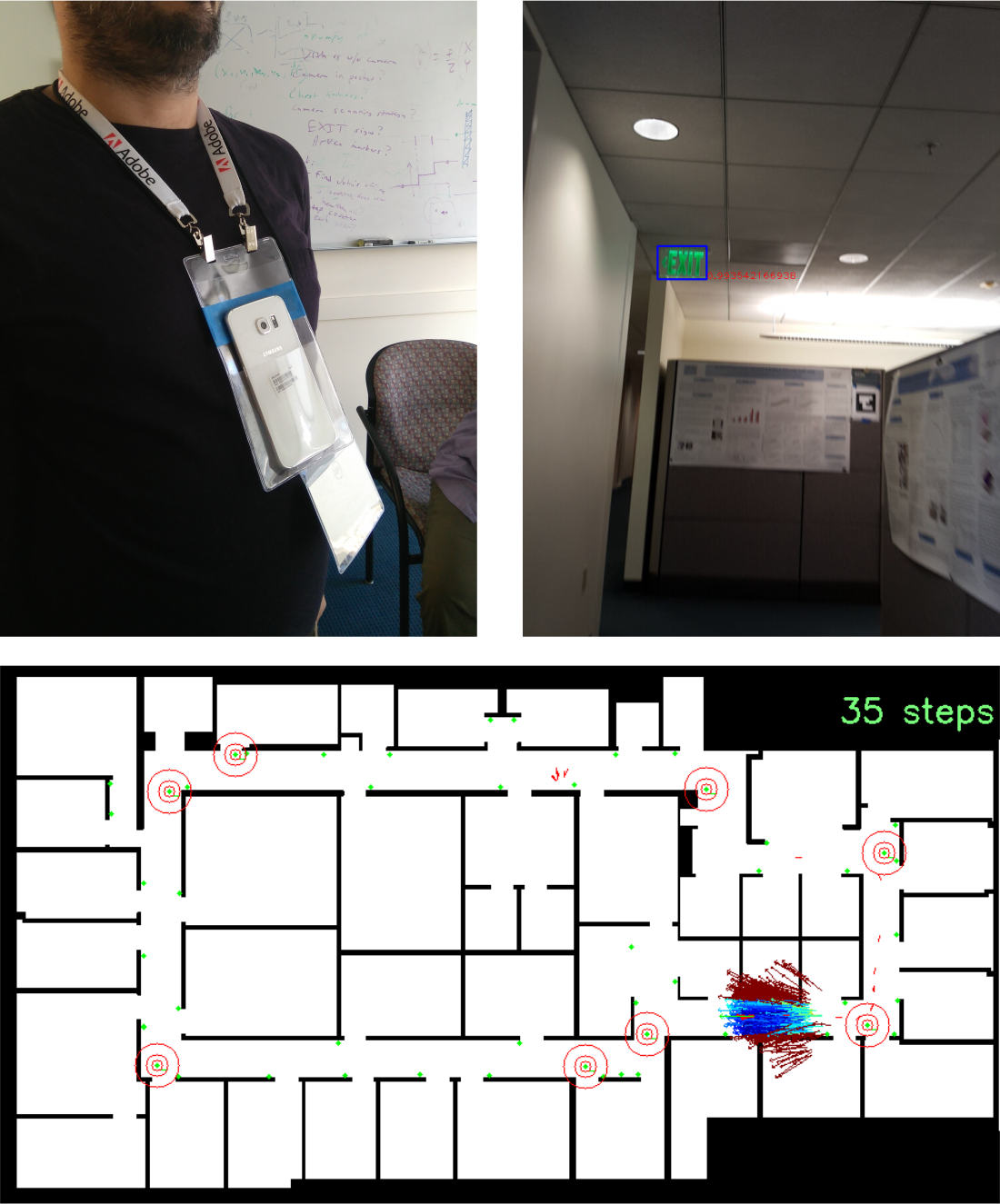

A Computer Vision-Based Indoor Wayfinding Tool

Crowd-Sourced Description for Web-Based Video (CSD)

Talking Signs

ZoomBoard: an Affordable, Portable System to Improve Access to Presentations and Lecture Notes for Low Vision Viewers

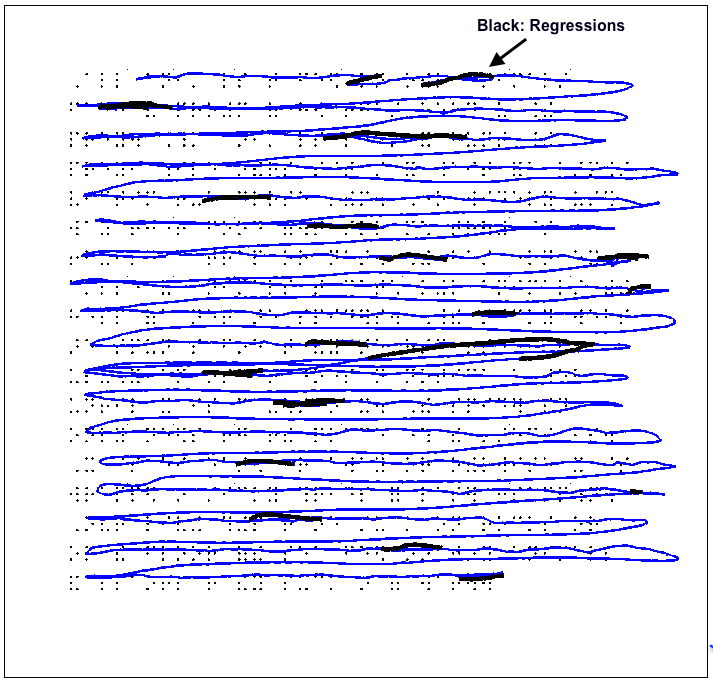

Regressions in Braille Reading

Sign Finder

Tutorials and Reference

Tactile Graphics Helper (TGH)

Workshop Series on Computer Vision and Sensor-Enabled Assistive Technology for Visual Impairment

Display Reader

BLaDE

Centers

- Rehabilitation Engineering Research Center
  The Center's research goal is to develop and apply new scientific knowledge and practical, cost-effective devices to better understand and address the real-world problems of blind, visually impaired, and deaf-blind...

People

Current People

- Charity Pitcher-Cooper, Research Associate (pronouns: she/her)
  I joined The Smith-Kettlewell Rehabilitation Engineering Research Center (RERC) team in 2017 as an assistant to scientist Dr. […]

Past People

Collaborators

Internal Collaborators

External Collaborators

- Sergio Mascetti, Affiliate Scientist, Department of Computer Science, University of Milan
  Sergio Mascetti is Associate Professor at the Department of Computer Science of the Università degli Studi di Milano where he also received his BSc, […]

News

- Oakland High School’s Students Visit Smith-Kettlewell
  On Friday, February 6th, students from Oakland High School's Innovative Design and Engineering Academy visited Smith-Kettlewell. Students learned about different kinds of accessibility research directly from some of our scientists, giving...

- Empowering Data Vision: A Self-Paced Introduction to the Convergence of AI and Data Science
  July 25, 2025, San Francisco, CA

- SKERI Scientists Join the IGNITE STEM Fair at Burton High School
  On April 18, 2024, SKERI scientists enjoyed the beautiful Phillip and Sala Burton Academic High School campus while offering its students hands-on, experiential, and interactive examples of their work.

- SKERI Researchers Bring their Rehabilitation and Assistive Technology to CSUN Tech Conference
  Smith-Kettlewell’s Rehabilitation Engineering Research Center (RERC) on Blindness and Low Vision, funded by the National Institute on Disability, Independent Living and Rehabilitation Research (NIDILRR), supports eight projects related to visual...

- CamIO Receives Supplement to Enhance Software Tools for Open Science
  Dr. James Coughlan has been awarded funds to increase access to his CamIO tool for making objects accessible to blind and visually impaired persons. The funds were part of NIH's Notice of Special Interest for...

- SKERI Receives Rehabilitation Engineering Research Center (RERC) grant on Blindness and Low Vision
  Smith-Kettlewell is proud to announce the newly awarded Rehabilitation Engineering Research Center (RERC) grant on Blindness and Low Vision. This is a five-year grant from the National Institute on Disability,...

- SKERI Researcher talks Indoor Navigation & Mapping on Blind Bargains
  The work of Dr. James Coughlan and Brandon Biggs was again recognized at the annual CSUN conference, where Brandon was interviewed for a podcast on Blind Bargains, a source for...

Events

Blindness and Low Vision Support Group, Year Recap (Hybrid)

Wednesday, May 20th, 2026 – 3:30 PM to 5:00 PM

Peer Discussion: Share and Grow your Blindness and Low Vision Toolbox (Hybrid)

Wednesday, April 15th, 2026 – 3:30 PM to 5:00 PM

Join us for our next support group session, facilitated by Annemarie. This is an open discussion: come ready to share...

Get Involved

If you are interested in vision science or want to learn more about low vision and blindness, there are many opportunities to get involved at The Smith-Kettlewell Eye Research Institute.