
Participate in our studies! Visit Teng Lab Experiment Participation for details.

Science you can support directly!

We are running a pair of crowdfunding campaigns through the site Experiment.com. Visit the project pages or watch the videos for more details:

Project Robin: How Can Ultrasonic Wearables be Most Useful for Humans?

Understanding Brain Dynamics in Braille Reading: Letters to Language

Welcome to the Cognition, Action, and Neural Dynamics Laboratory

We aim to better understand how people perceive, interact with, and move through the world, especially when vision is unavailable. To this end, the lab studies perception and sensory processing in multiple sensory modalities, with particular interests in echolocation and braille reading in blind persons. We are also interested in mobility and navigation, including assistive technology using nonvisual cues. These are wide-ranging topics, which we approach using a combination of psychophysical, neurophysiological, engineering, and computational tools.

Spatial Perception and Navigation

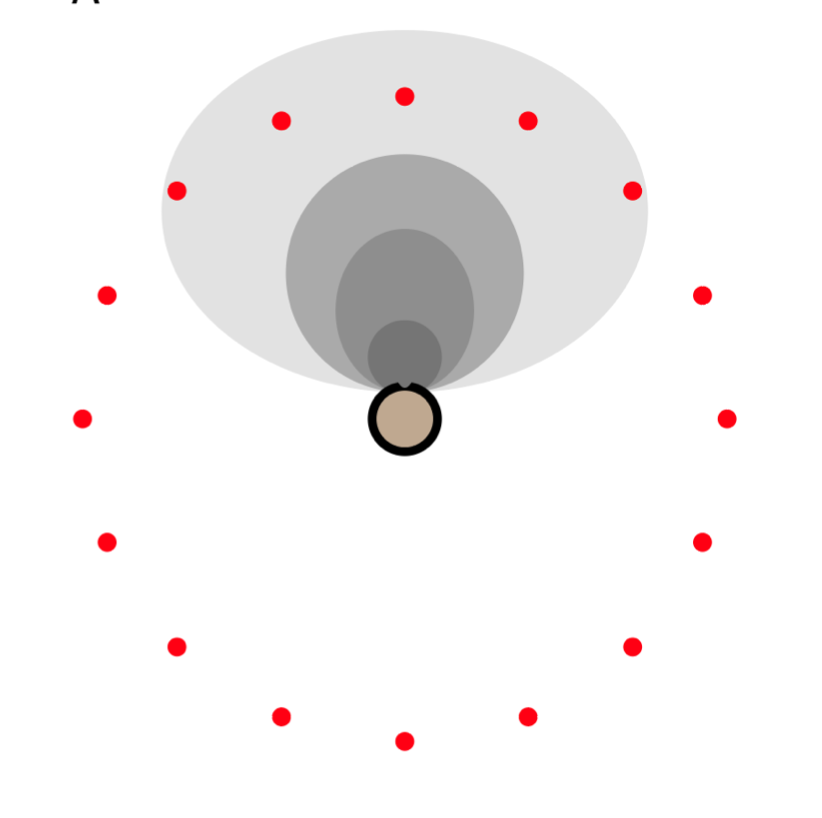

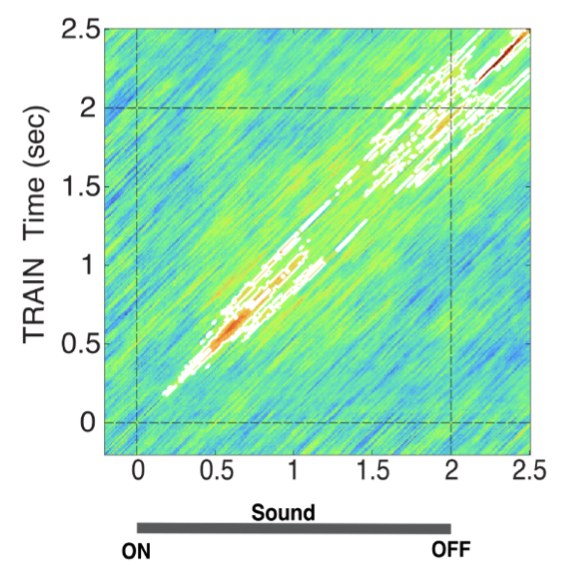

It’s critically important to have a sense of the space around us — nearby objects, obstacles, people, walls, pathways, hazards, and so on. How do we convert sensory information into that perceptual space, especially when augmenting or replacing vision? How is this information applied when actually navigating to a destination? One cue to the surrounding environment is acoustic reverberation. Sounds reflect off thousands of nearby and distant surfaces, blending together to create a signature of the size, shape, and contents of a scene. Previous research shows that our auditory systems separate reverberant backgrounds from direct sounds, guided by internal statistical models of real-world acoustics. We continue this work by asking, for example, when reverberant scene analysis develops and how it is affected by blindness.

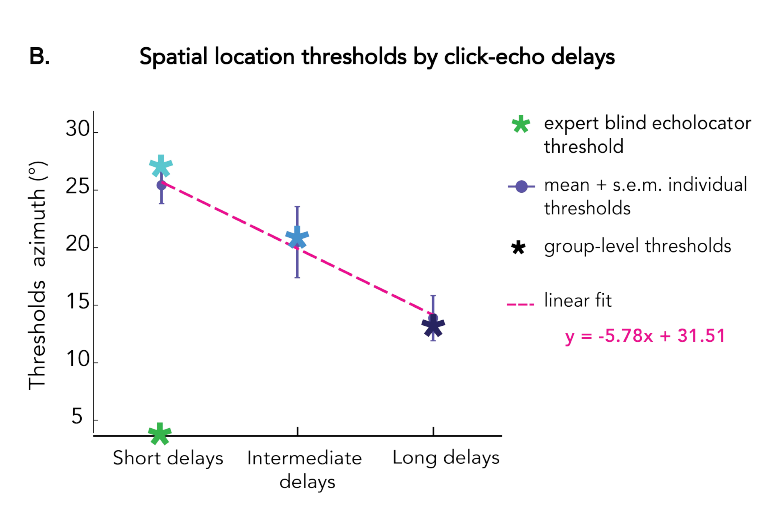

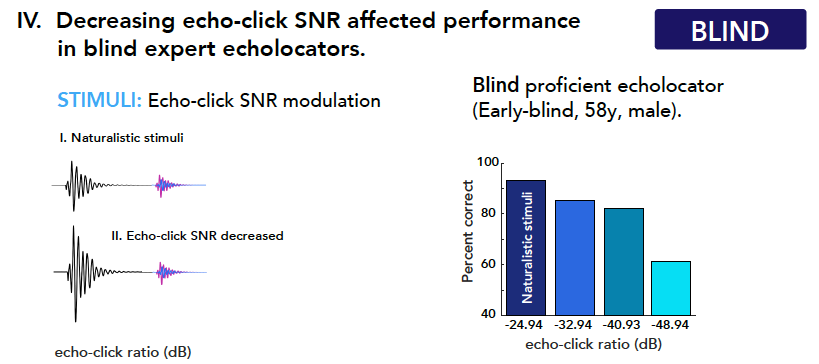

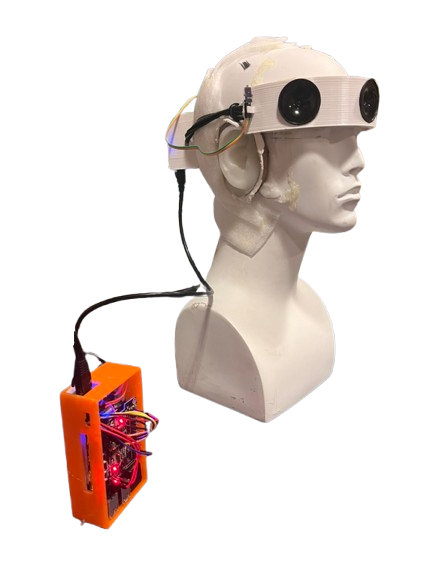

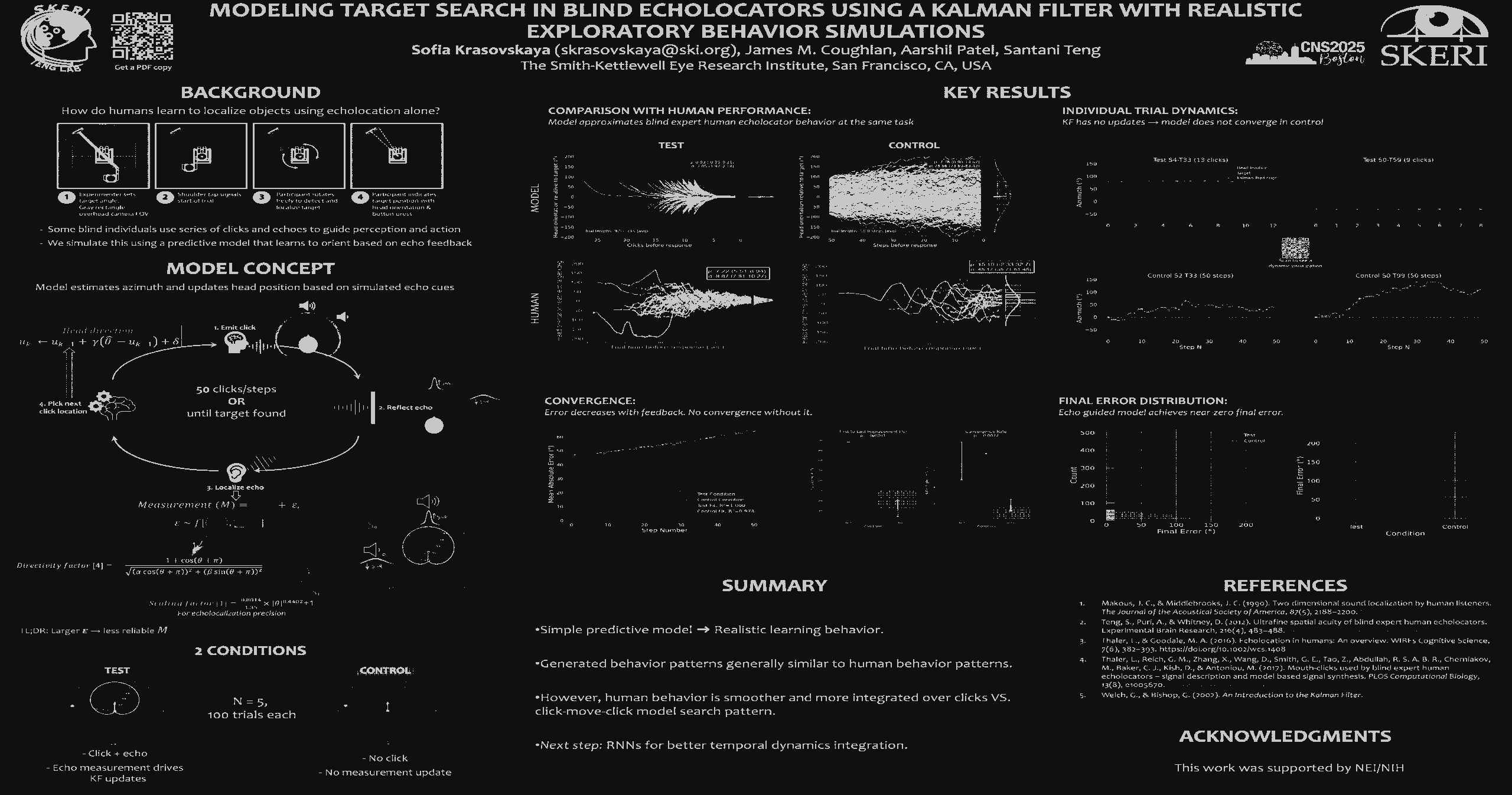

Echolocation, Braille, and Active Sensing

Sometimes, the surrounding world is too dark and silent for typical vision and hearing. This is true in deep caves, for example, or in murky water where little light penetrates. Animals living in these environments often have the ability to echolocate: They make sounds and listen for their reflections. Like turning on a flashlight in a dark room, echolocation is a way to illuminate objects and spaces actively using sound. Using tongue clicks, cane taps or footfalls, some blind humans have demonstrated an ability to use echolocation (also called sonar, or biosonar in living organisms) to sense, interact with, and move around the world. What information does echolocation afford its practitioners? What are the factors contributing to learning it? How broadly is it accessible to blind, and sighted, people?

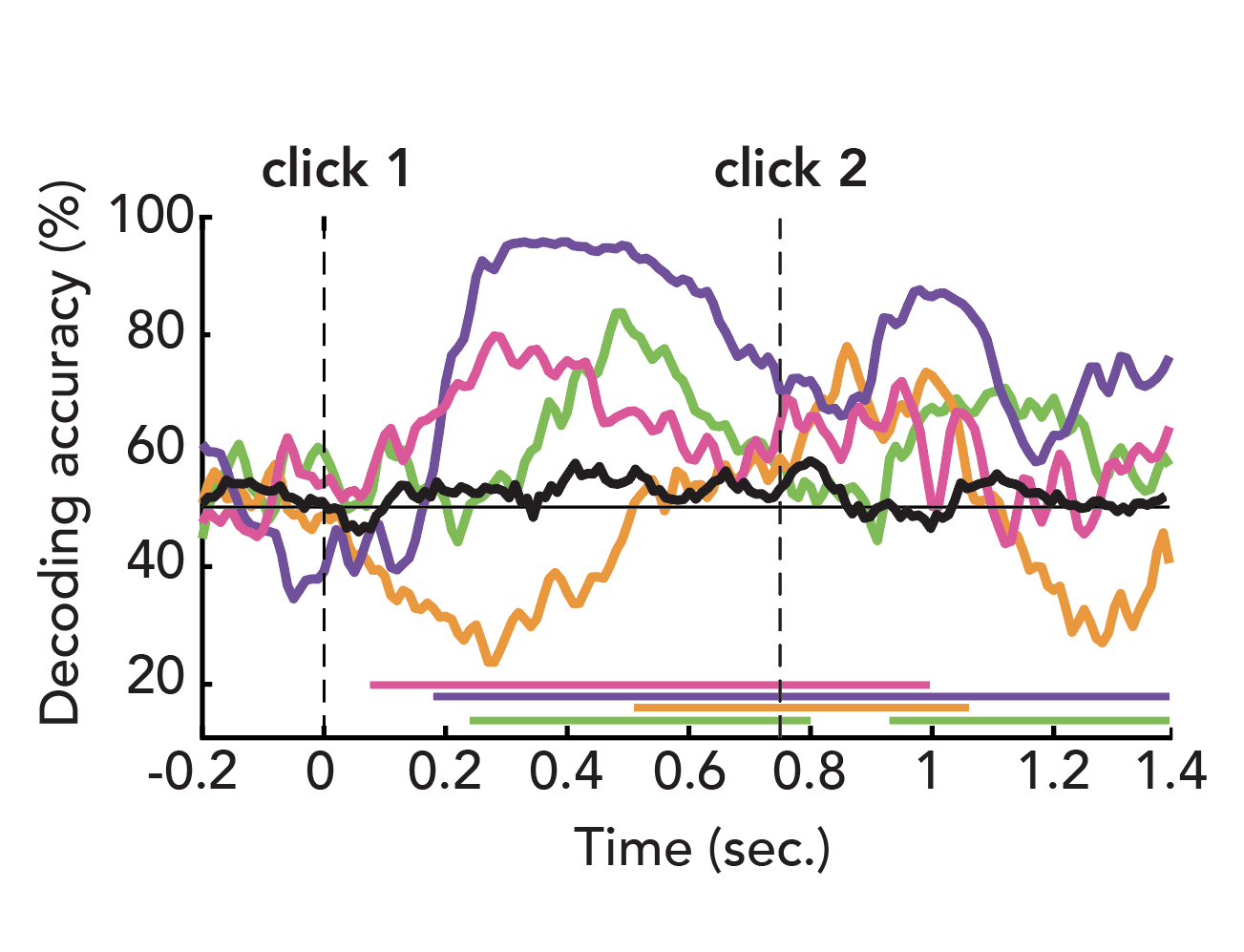

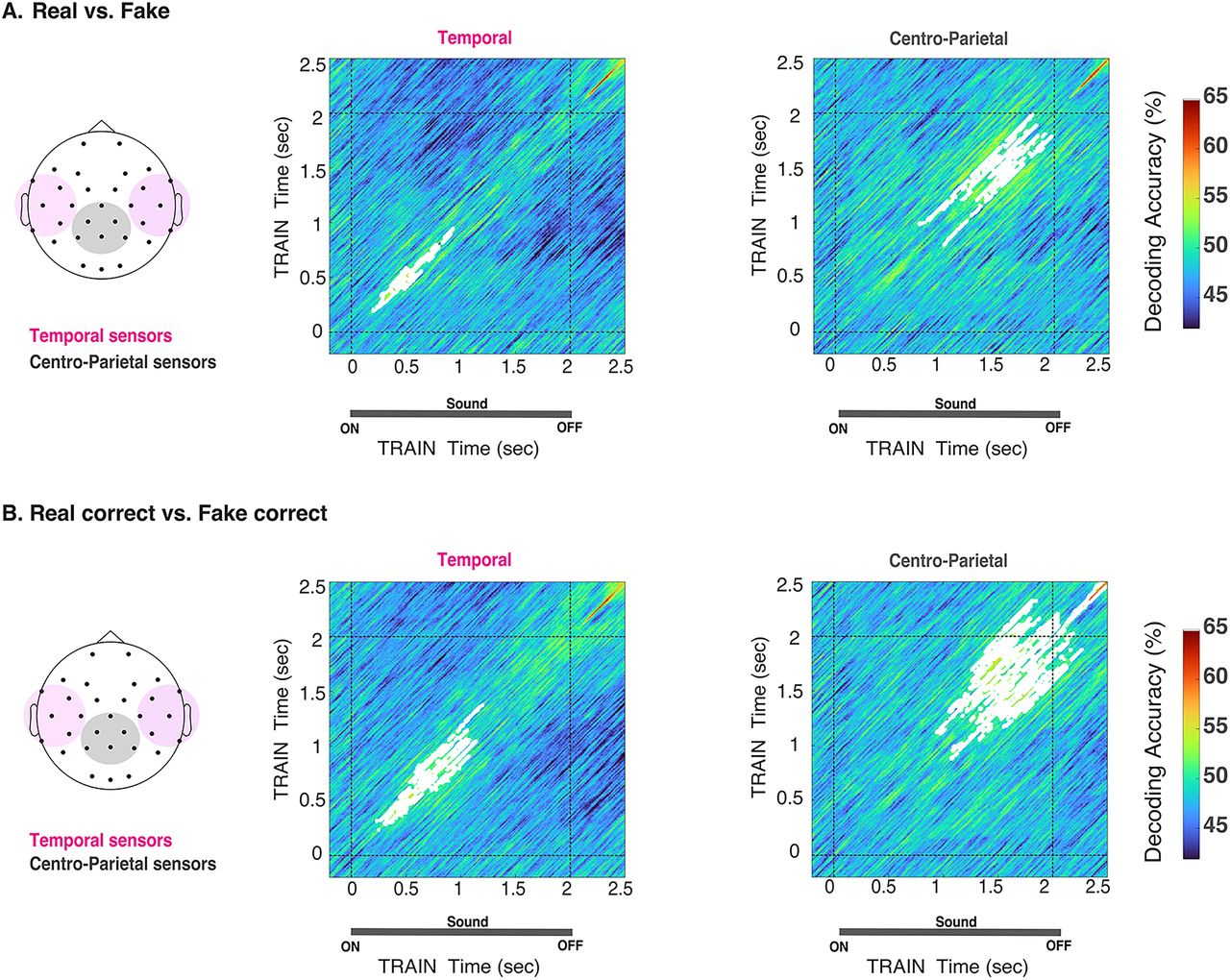

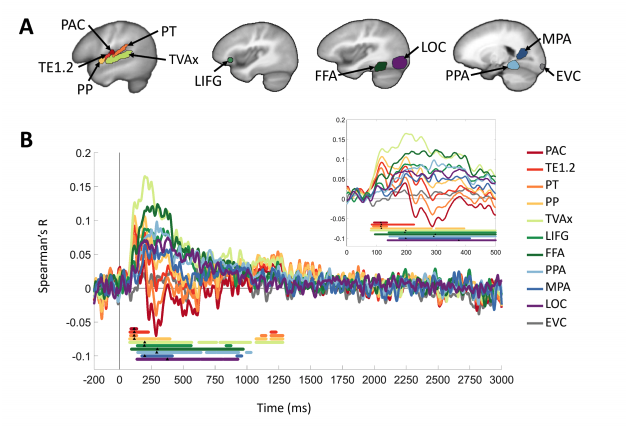

Neural Dynamics and Crossmodal Plasticity

The visual cortex does not fall silent in blindness. About half the human neocortex is thought to be devoted to visual processing. In blind people, however, these regions are deprived of the visual input that normally drives them, and they become responsive to auditory, tactile, and other nonvisual tasks, a phenomenon called crossmodal plasticity. What kinds of computations take place under these circumstances? How do crossmodally recruited brain regions represent information? To what extent are the connections mediating this plasticity present in sighted people as well? These and many other basic questions remain debated despite decades of crossmodal plasticity research.

Want to join the lab or participate in a study?

Check here for details on any current funded or volunteer opportunities.

For inquiries, email the lab PI at santani@ski.org

Publications

Projects


Teng Lab Experiment Participation

Experiments currently available in the Cognition, Action, and Neural Dynamics Lab: a portal to the signup form and descriptions of studies in active recruitment.

Optimizing Echoacoustics: An online perceptual study (Under construction)

An online study of echoacoustic perception

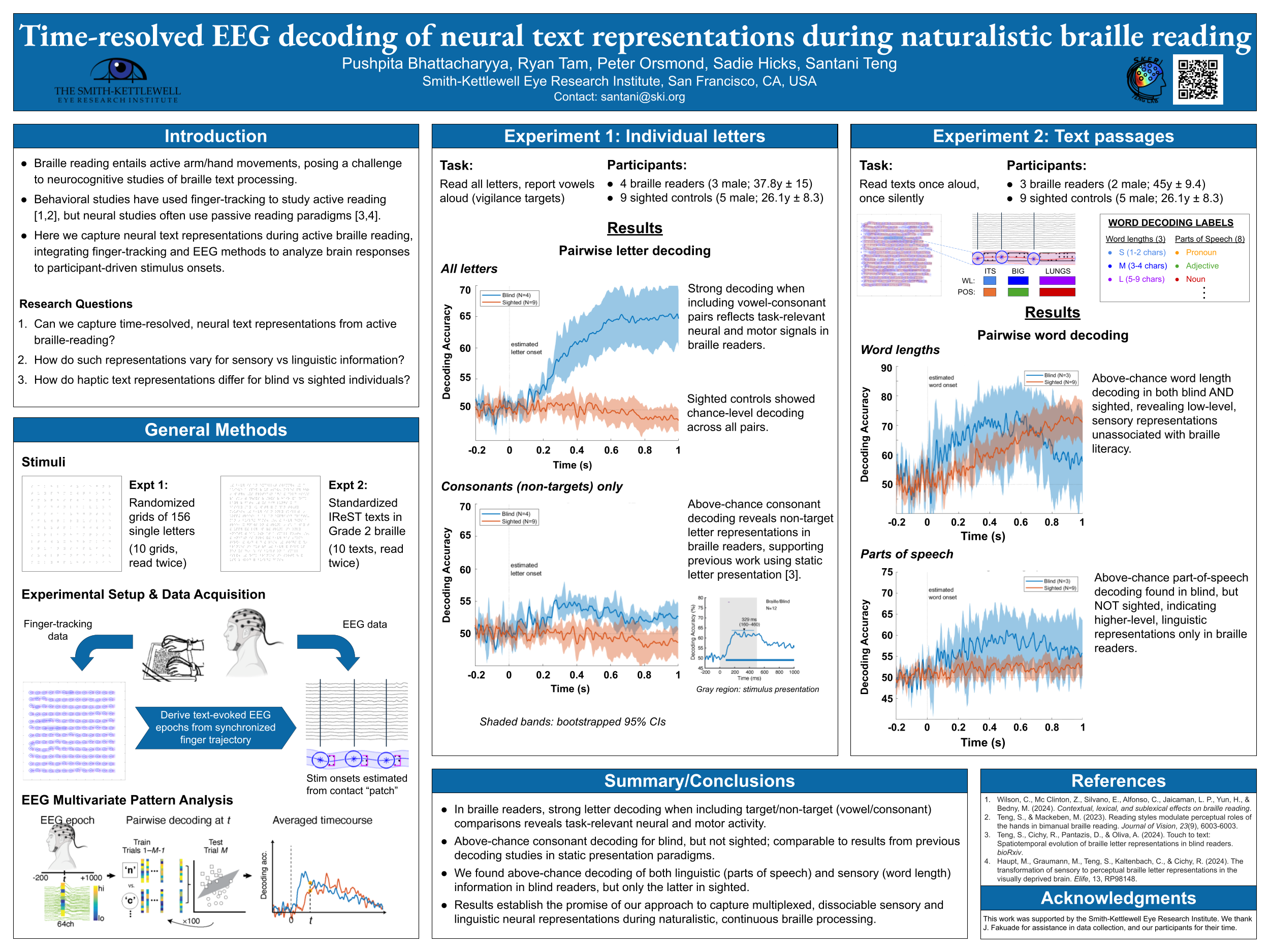

Neurodynamics of Braille Reading

[Under construction]

Neuroimaging techniques such as EEG/MEG and fMRI offer the potential to trace how braille information propagates through the brain as it is transformed from a dot pattern into meaningful alphabetic information, and to compare this with the analogous processing stream for printed letters in sighted people.

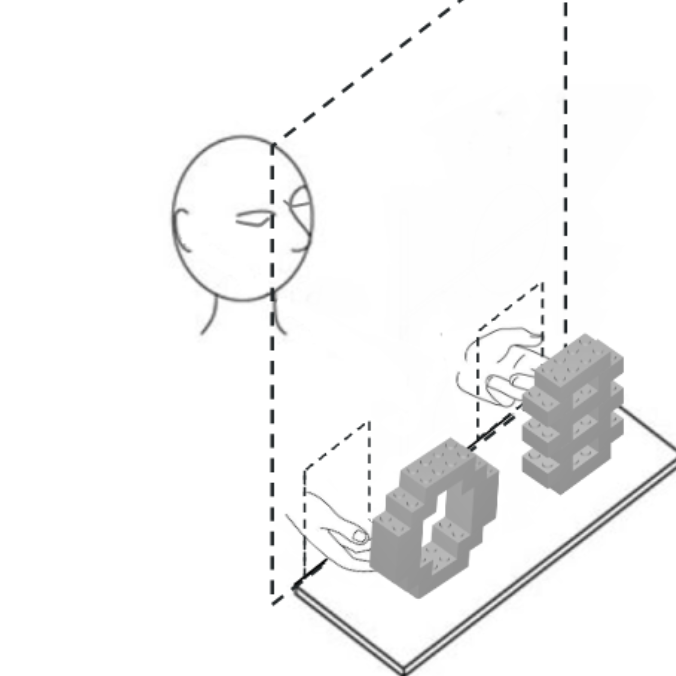

The Kinematics of Braille Reading

[Under construction]

When blind persons read braille, a system of raised dots for tactile reading and writing, how is the information processed? How do a few indentations on the fingerpads translate to linguistic information, and how does the text, in turn, influence the motions of the hands reading it? Our work on braille addresses these processes on several levels.

Hearing the World: A Remote Study of Auditory Perception

We aim to investigate the nature of auditory perception and how the brain learns rules for interpreting sounds.

Haptic Kinematics of Two-Handed Braille Reading in Blind Adults

This page (currently under construction) accompanies a work-in-progress poster at the 2020 Eurohaptics meeting.

Reverberant Auditory Scene Analysis

The world is rich in sounds and their echoes from reflecting surfaces, making acoustic reverberation a ubiquitous part of everyday life. We usually think of reverberation as a nuisance to overcome (it makes understanding speech or locating sound sources harder), but it also carries useful information, acting as a signature of the space it fills. Reverberation can tell us how big a room is, where we are inside it, and whether there are objects nearby. This has important implications not only for auditory spatial perception in typical individuals, but also for people with sensory loss. Sound…

Human Echolocation

What is echolocation? Sometimes, the surrounding world is too dark and silent for typical vision and hearing. This is true in deep caves, for example, or in murky water where little light penetrates. Animals living in these environments often have the ability to echolocate: They make sounds and listen for their reflections. Like turning on a flashlight in a dark room, echolocation is a way to illuminate objects and spaces actively using sound.
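As a rough illustration of the principle (not a description of the lab's methods), the delay between a click and its echo scales with the distance to the reflecting surface: the sound travels out and back, so the one-way distance is about half of the speed of sound times the delay. The sketch below assumes a speed of sound of about 343 m/s (dry air at roughly 20 °C); the function names are hypothetical.

```python
# Hypothetical helpers illustrating the echo-delay principle; not the lab's code.
SPEED_OF_SOUND_M_PER_S = 343.0  # assumed: dry air at about 20 °C


def echo_distance_m(delay_s: float, speed: float = SPEED_OF_SOUND_M_PER_S) -> float:
    """Estimate the distance to a reflecting surface from the round-trip echo delay.

    The sound travels to the surface and back, so the one-way distance is
    half of speed multiplied by delay.
    """
    if delay_s < 0:
        raise ValueError("delay must be non-negative")
    return speed * delay_s / 2.0


def echo_delay_s(distance_m: float, speed: float = SPEED_OF_SOUND_M_PER_S) -> float:
    """Inverse relation: round-trip delay for a surface at the given distance."""
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    return 2.0 * distance_m / speed


if __name__ == "__main__":
    # A wall 1 m away returns a tongue click after roughly 5.8 ms.
    print(f"{echo_delay_s(1.0) * 1000:.1f} ms")
```

Running the script prints `5.8 ms`, showing how short the echo delays from nearby objects are under these assumptions.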

Centers

- Rehabilitation Engineering Research Center
  The Center's research goal is to develop and apply new scientific knowledge and practical, cost-effective devices to better understand and address the real-world problems of blind, visually impaired, and deaf-blind...

People

Current People

Past People

Funding

Collaborators

Internal Collaborators

External Collaborators

News

- Oakland High School’s Students Visit Smith-Kettlewell
  On Friday, February 6th, students from Oakland High School's Innovative Design and Engineering Academy visited Smith-Kettlewell. Students learned about different kinds of accessibility research directly from some of our scientists, giving...

- Smith-Kettlewell Eye Research Institute to Illuminate Adaptive Strategies in Vision Impairment at IMRF Symposium
  The Smith-Kettlewell Eye Research Institute played a key role at this year's International Multisensory Research Forum (IMRF) in Reno, Nevada, beginning June 17, 2024. An all-SKERI symposium "Shifting Sensory Reliance:...

- SKERI Scientists Present Groundbreaking Research at the 24th Annual Vision Sciences Society Meeting
  The Smith-Kettlewell Eye Research Institute (SKERI) is proud to announce its extensive participation in the 24th Annual Meeting of the Vision Sciences Society (VSS), held from May 17 to May...

- SKERI Postdoc Haydée García-Lázaro receives the Elsevier Vision Research Travel Award
  Congratulations to Dr. Haydée García-Lázaro for receiving the Elsevier Vision Research Travel Award to attend the Vision Sciences Society (VSS) Conference.

- SKERI Scientists Join the IGNITE STEM Fair at Burton High School
  On April 18, 2024, SKERI scientists enjoyed the beautiful Phillip and Sala Burton Academic High School campus while offering its students hands-on, experiential, and interactive examples of SKERI scientists' work.

- SKERI Researchers Bring their Rehabilitation and Assistive Technology to CSUN Tech Conference
  Smith-Kettlewell’s Rehabilitation Engineering Research Center (RERC) on Blindness and Low Vision, funded by the National Institute on Disability, Independent Living and Rehabilitation Research (NIDILRR), supports eight projects related to visual...

Events


No upcoming events found.

Get Involved

If you are interested in vision science or want to learn more about low vision and blindness, there are many opportunities to get involved at The Smith-Kettlewell Eye Research Institute.